Real estate tips and trends

Latest articles from Rex

March 19, 2024 | 3 Minute Read

Managing your financial workflows has never been easier, thanks to the new integration between Rex Sales & Rentals CRM and Xero.

March 1, 2024 | 3 Minute Read

The enhanced Reminders feature within Rex Sales & Rentals CRM is tailored to improve task management and ensure comprehensive oversight across all agency operations.

February 22, 2024 | 2 Minute Read

Rex Software's CEO, Davin Miller, announces a 38% expansion of the UK team and shares insights from his first visit, highlighting the UK's growing importance.

February 20, 2024 | 4 Minute Read

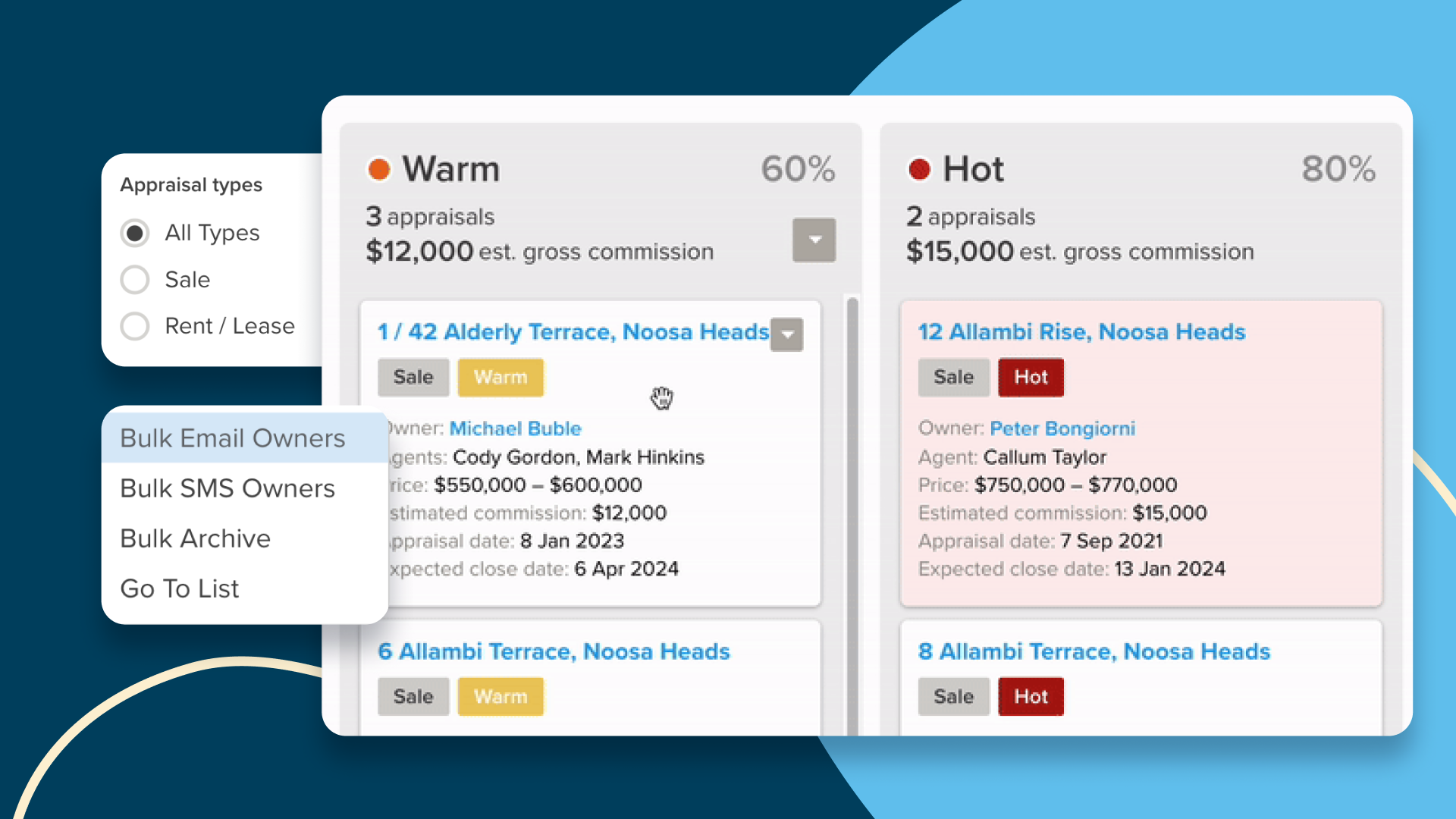

Rex Sales & Rentals CRM’s newest feature will help you manage, forecast, and engage in the journey from appraisal to listing, keeping you focused on the right opportunities at the right time.

February 14, 2024 | 4 Minute Read

Valentine's Day reminds us of the importance of relationships in real estate. CRM systems like Rex revolutionise buyer-seller matching, enhancing efficiency and personalised service in the industry.

February 1, 2024 | 2 Minute Read

In the intensely competitive world of real estate, having the right tools is crucial. Rex CRM equips real estate businesses to manage their operations efficiently.

January 16, 2024 | 6 Minute Read

In this article, we explore the AI-driven tools enhancing everyday real estate operations, the benefits for early adopters, and what we can anticipate from AI in the future.

October 30, 2023 | 5 Minute Read

Discover how Rex Reach's tailored approach outshines generic boosted posts, offering broader reach, precise targeting, and a superior ROI for real estate

October 13, 2023 | 5 Minute Read

Beyond convenience, standardising agency processes is your golden ticket to ramping up referrals, streamlining your time, elevating agency insights, and pocketing more commission.

October 6, 2023 | 4 Minute Read

Are you measuring 'Speed to Lead' in your agency? Discover the impact of response times on lead conversion, insights from Homeflow's study, and the future role of AI in optimising processes.

September 27, 2023 | 3 Minute Read

In an economic market that's shaking homebuyer confidence, the true strength of real estate agents doesn't lie in market trends or advertising tactics but in genuine human relationships.

September 20, 2023 | 3 Minute Read

In this article, we explore how pivoting from GCI to volume can be the key to navigating the market's challenges and propelling growth.

September 7, 2023 | 5 Minute Read

These seven CRM strategies, ranging from optimising your calendar to automating mundane tasks, are the building blocks of a real estate business that is not just productive but also profitable.

August 29, 2023 | 3 Minute Read

As the Australian property market anticipates a 2-5% price surge by 2024, the real battleground for agents shifts to the digital realm.

August 24, 2023 | 5 Minute Read

In the complex dance of decision-making, the 'Region-Beta Paradox' reveals a startling truth: we often tolerate minor inconveniences longer than major ones. For estate agents, this means clinging to '

August 23, 2023 | 5 Minute Read

Learn why focusing on relationships rather than transactions leads to long-term success and resilience in the ever-changing real estate industry.

August 17, 2023 | 3 Minute Read

Don't miss this vibrant celebration on August 24th, 2023, at The Triffid. Get your tickets now and support the bands and Beyond Blue charity.

August 15, 2023 | 5 Minute Read

Explore the vital role of digital marketing in modern estate agency. In this article, we address why estate agents must embrace digital strategies to stay competitive.

August 14, 2023 | 4 Minute Read

Real estate trust accounting plays a crucial role in maintaining transparency, accountability, and compliance within the real estate industry...
